-

How to define the hottest ai startups in 2026

- 1. Fast adoption and early traction from real-world usage

- 2. Funding momentum and recent investment signals

- 3. Product differentiation that creates new categories

- 4. Enterprise pilots, partnerships, and early customer engagement

- 5. Ecosystem visibility with developers, operators, and buyers

- 6. Experimentation culture that iterates quickly without legacy drag

- 7. Silicon Valley “hot” signals: Bay Area HQ, post-2020 founding or AI pivot, and product-market fit indicators

- Quick Comparison of hottest ai startups

-

Top 30 hottest ai startups to watch in 2026

- 1. Listen Labs

- 2. Gladia

- 3. Rilla

- 4. Blossom

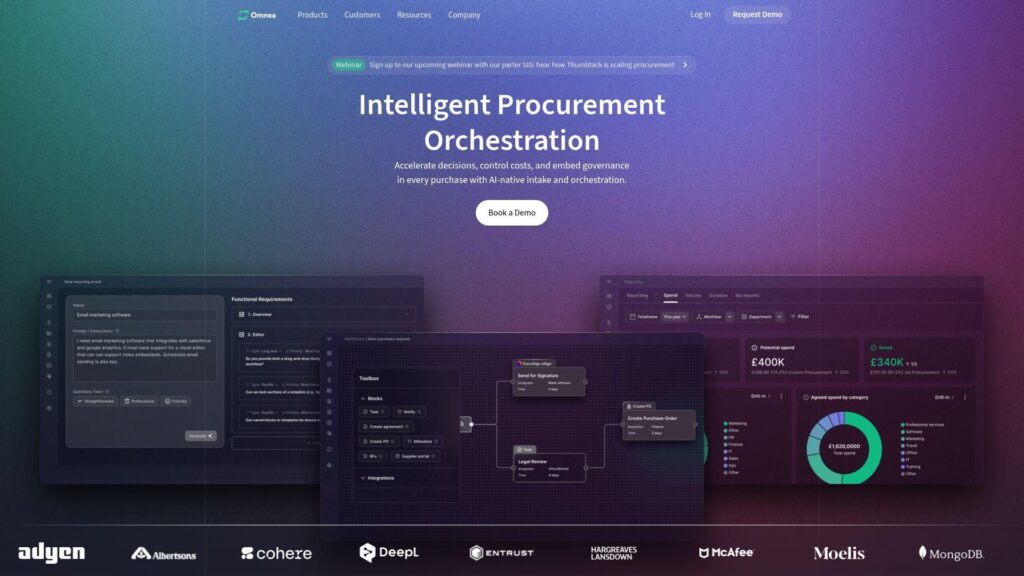

- 5. Omnea

- 6. Adaptive Security

- 7. Avoca

- 8. Traba

- 9. Harmonic

- 10. Ambience Healthcare

- 11. Tennr

- 12. XBOW

- 13. OpenRouter

- 14. Harvey

- 15. Vivodyne

- 16. Abridge

- 17. Glean

- 18. Prepared

- 19. Mashgin

- 20. Grammarly

- 21. 6sense

- 22. Checkr

- 23. Textio

- 24. Dataiku

- 25. AlphaSense

- 26. Labelbox

- 27. Ironclad

- 28. Fictiv

- 29. Akkio

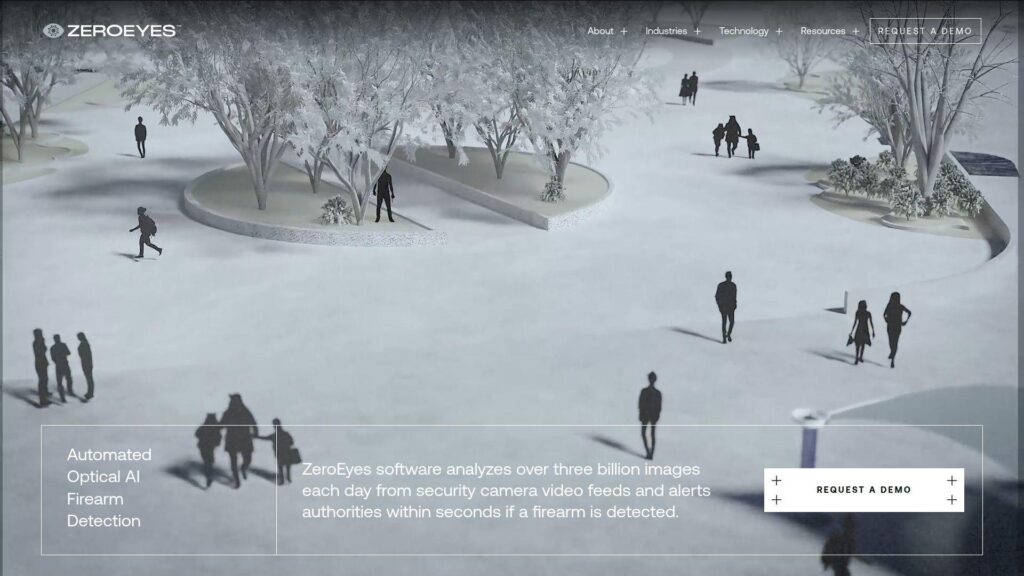

- 30. ZeroEyes

-

Trends defining the next wave of hottest ai startups

- 1. Explosion of AI-native companies built with AI at the core

- 2. Lower barriers to building AI products with modern tools, frameworks, and cloud access

- 3. Vertical AI specialization across healthcare, finance, logistics, and legal

- 4. Shift from AI tools to AI coworkers: agents, copilots, and autonomous systems

- 5. Faster go-to-market cycles with rapid launches and iterations

- 6. Enterprises testing startups earlier to accelerate adoption and visibility

- 7. Hardware meets intelligence: infrastructure pressure from larger models and scaling demands

- 8. Enterprise collaboration: co-building with startups to bridge legacy systems and innovation

- 9. Ethical AI and transparency: bias reduction, data auditing, and accountability expectations

- 10. Talent redistribution as researchers and engineers move from big tech to startups

- 11. GenAI moving into production environments as enterprise adoption accelerates

-

Funding, valuation, and traction signals behind hottest ai startups

- 1. Ranking signals used by curated lists: valuation, funding, and growth

- 2. Funding stages as a shorthand for maturity: Seed through late-stage rounds

- 3. Investor patterns shaping the hottest ai startups ecosystem

- 4. Corporate participation and strategic alignment with major cloud and AI platforms

- 5. Channel, reseller, and partner programs as distribution accelerators

- 6. Traction indicators: early adoption, enterprise renewals, and real usage outcomes

- 7. Hiring momentum as a proxy for scaling and go-to-market readiness

- 8. From hype to product-market fit: what “hot” means after 2023 to 2024

-

Hottest ai startups by category and real-world use case

- 1. Enterprise search and knowledge assistants for internal productivity

- 2. Legal AI platforms and contract copilots for professional services

- 3. Healthcare AI copilots for documentation and clinical workflows

- 4. Speech-to-text, transcription, and voice interfaces as API-first products

- 5. Cybersecurity and data security posture management powered by AI

- 6. Public safety and emergency response AI for call handling and triage

- 7. Creative AI for video generation, 3D capture, and voice synthesis

- 8. Developer tools and coding assistants for building AI applications faster

- 9. Back-office agents for procurement, finance, and operational automation

- 10. Autonomous market research and customer insights platforms

-

Where the hottest ai startups are being built: Silicon Valley and beyond

- 1. Silicon Valley as an AI hotbed fueled by talent density and big-tech adjacency

- 2. Bay Area AI company mix: enterprise automation, healthcare AI, and developer platforms

- 3. Silicon Valley startup examples: Moveworks, Tempus AI, Voyage, Cruise, Plus.ai

- 4. New York as a major center for enterprise AI, sales, and healthcare innovation

- 5. Global hotspots alongside the U.S.: Paris and London in curated AI startup lists

- 6. Remote-first teams and distributed HQ models for AI startups

- 7. Using startup directories to source partnerships, talent, and competitive intel

- 1Byte cloud computing and web hosting for AI startup teams

From 1Byte’s vantage point, “hot” is not a vibe. It is measurable behavior in production. We watch what founders deploy, what operators harden, and what buyers renew. The pattern is consistent. Teams that ship agents, secure data, and prove outcomes keep compounding.

McKinsey estimates $2.6 trillion to $4.4 trillion in annual GenAI value; Gartner forecasts $723.4 billion in 2025 public cloud spend; Deloitte expects 25% enterprise adoption of AI agents by 2025.

Two “real-world” signals matter to us more than any demo. First, buyers are moving from chat to workflow. Second, infra teams are budgeting for inference like they budgeted for databases. Klarna-style customer support automation and Morgan Stanley-style knowledge retrieval are now normal ambitions. That change is why we built this watchlist like operators, not spectators.

Our goal here is practical. We want a list we would actually partner with, integrate with, or host. We also want criteria you can reuse on your own pipeline. That is how “hottest” becomes actionable.

How to define the hottest AI startups in 2026

1. Fast adoption and early traction from real-world usage

Adoption is the first hard signal. We look for usage that survives the second week. Stickiness shows up as repeat prompts, repeat workflows, and repeat API calls. The strongest teams design for real operators. They ship admin controls, audit logs, and sane defaults.

In our experience, “fast adoption” rarely comes from a flashy model. It comes from removing friction in one job role. Helpdesk automation, clinical notes, and contract review are popular for a reason. Each maps to a daily, repetitive pain.
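One way to make "usage that survives the second week" concrete is a simple retention check. The sketch below is illustrative only: the event log format, the user IDs, and the 7 to 13 day window are assumptions, not a metric definition taken from any vendor.

```python
from datetime import date


def second_week_retention(events: list[tuple[str, date]]) -> float:
    """Share of users with any activity 7-13 days after their first event."""
    # Find each user's first day of activity.
    first_seen: dict[str, date] = {}
    for user, day in events:
        if user not in first_seen or day < first_seen[user]:
            first_seen[user] = day
    # A user counts as retained if any event falls in their second week.
    retained = {
        user for user, day in events
        if 7 <= (day - first_seen[user]).days <= 13
    }
    return len(retained) / len(first_seen) if first_seen else 0.0


# Hypothetical usage log: (user_id, day of activity).
log = [
    ("ana", date(2026, 1, 5)), ("ana", date(2026, 1, 13)),  # back on day 8
    ("bo", date(2026, 1, 5)), ("bo", date(2026, 1, 6)),     # gone after day 1
    ("cy", date(2026, 1, 7)), ("cy", date(2026, 1, 21)),    # back on day 14
    ("di", date(2026, 1, 8)), ("di", date(2026, 1, 15)),    # back on day 7
]
print(second_week_retention(log))
```

This simplification counts every user in the log, including those who signed up too recently to have a second week yet; a production version would restrict the cohort first.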

2. Funding momentum and recent investment signals

Funding is not quality, yet it predicts runway. We treat it as a constraint check. A serious training plan needs capital. A serious go-to-market plan also needs capital.

Momentum is more than round size. We track investor “stacking” across stages. We also watch who joins the board. When specialist investors show up, diligence is usually deeper.

Still, we prefer teams that can earn their next milestone. Durable companies tie spend to shipped capability. They do not outsource belief to headlines.

3. Product differentiation that creates new categories

Many AI products are wrappers. The hot startups create a category boundary. They make a workflow possible that felt impractical before. That is a sharper form of differentiation.

We test differentiation with an operator question. What can this product do without heroic setup? If the answer is “a lot,” we pay attention. If the answer is “it depends,” the product is early.

Category creation also shows up in language. Buyers start using the startup’s words. That is a subtle but powerful moat.

4. Enterprise pilots, partnerships, and early customer engagement

Enterprise pilots are where AI dreams meet ugly data. Hot startups expect that collision. They build connectors, identity hooks, and governance surfaces early. They do not wait for “later.”

Partnerships matter when they unlock distribution. Cloud marketplaces help. SIs help when deployment is complex. OEM deals help in physical AI. Each is a force multiplier if execution is disciplined.

We also watch how founders talk to customers. Great teams run pilots like product research. They do not treat pilots like theater.

5. Ecosystem visibility with developers, operators, and buyers

Ecosystem visibility is not vanity metrics. It is proof that practitioners care. Developers show it via SDKs, templates, and active repos. Operators show it via runbooks, logs, and clear failure modes.

Buyers show it via shortlists. A startup becomes “hot” when procurement hears the name repeatedly. That often happens before public press catches up. We see it in integration requests and security questionnaires.

Healthy ecosystems also create third-party content. Tutorials, connectors, and community answers are compounding assets.

6. Experimentation culture that iterates quickly without legacy drag

AI startups win by learning faster. Experimentation culture is the engine. We like teams that can ship weekly without breaking production. That usually implies strong internal tooling and clear ownership.

Legacy drag shows up as slow releases and brittle integrations. Hot teams avoid that. They build modular systems. They isolate prompts, tools, and policies. That makes change safer.

Culture also shows in incident response. Mature teams publish postmortems internally. They treat failures as product inputs, not blame events.

7. Silicon Valley “hot” signals: Bay Area HQ, post-2020 founding or AI pivot, and product-market fit indicators

Silicon Valley signals are imperfect, yet useful. Dense talent markets accelerate iteration. Nearby customers accelerate pilots. Bay Area adjacency also increases “serendipity throughput.”

Post-pivot stories matter too. Teams that reoriented around AI often bring domain context. That context prevents “demo-first” product design. It can also speed compliance readiness.

Product-market fit indicators are still the north star. We look for narrow use cases with expanding scope. That expansion is a strong clue of real pull.

Quick Comparison of hottest AI startups

We built this comparison for busy operators. It is a short table, not a verdict. Pricing and trials change quickly. We focus on what the tools are really for.

| Tool | Best for | From price | Trial/Free | Key limits |
|---|---|---|---|---|
| Glean | Enterprise knowledge search | Contact sales | Pilot-based | Connector coverage varies |
| Hebbia | Research over messy docs | Contact sales | Demo-led | Best with curated sources |
| Harvey | Legal workflows | Contact sales | Controlled rollout | Jurisdiction constraints |
| Abridge | Clinical note automation | Contact sales | Pilot-based | Integration workload |
| Deepgram | Speech-to-text APIs | Usage-based | Free tier | Audio quality sensitivity |
| ElevenLabs | Voice synthesis | Usage-based | Free tier | Brand safety controls |
| Wiz | Cloud security posture | Contact sales | Demo-led | Cloud scope required |
| Cyera | Data security posture | Contact sales | Demo-led | Deep data access needed |
| Cursor (Anysphere) | AI coding in IDE | Paid plans | Free option | Policy controls vary |
| Runway | Video generation workflows | Paid plans | Limited free | Output consistency limits |

Below is our full watchlist. These are the names we keep hearing in buyer calls, founder chats, and operator war rooms. Several are infrastructure-first. Others are vertical copilots. A few are “agent platforms” that want to become default work surfaces.

- Anthropic — safety-minded frontier models for enterprise use

- Mistral AI — efficient models and open-weight momentum

- Cohere — enterprise-grade LLM platform focus

- xAI — fast iteration on large-scale model capability

- Together AI — open model hosting and fine-tuning stack

- Perplexity — search-native answer experiences

- Glean — internal knowledge discovery with enterprise connectors

- Hebbia — analyst workflows across messy documents

- Writer — governed enterprise content and agents

- Sierra — customer service agents with workflow control

- Cognition — agentic coding and task execution

- Anysphere (Cursor) — IDE-native coding acceleration

- Replit — AI-native developer environment

- Codeium — developer copilots and enterprise controls

- LangChain — agent frameworks and orchestration primitives

- LlamaIndex — data-to-agent indexing and retrieval tooling

- Pinecone — managed vector search for retrieval systems

- Weaviate — vector database with hybrid retrieval

- Arize AI — model observability and evaluation workflows

- Wiz — cloud risk detection with fast inventory mapping

- Cyera — data posture management for sensitive estates

- HiddenLayer — ML model security and threat defense

- Harvey — legal copilots built for firms

- Spellbook — contract drafting and review assistance

- Abridge — clinician-first ambient documentation

- Hippocratic AI — healthcare-focused agent direction

- Ambience Healthcare — clinical workflow automation and notes

- Deepgram — speech recognition APIs at scale

- ElevenLabs — expressive voice synthesis for products

- Runway — creative generation for modern media pipelines

Top 30 hottest AI startups to watch in 2026

Our picks skew outcome-first. We look for teams shipping product that changes a daily workflow, not just a clever demo. Each tool gets a weighted score on seven criteria: value-for-money (20%), feature depth (20%), ease of setup and learning (15%), integrations and ecosystem (15%), UX and performance (10%), security and trust (10%), plus support and community (10%).

To keep this list practical, we favor tools that shorten a cycle. That could mean “insights overnight,” “notes in under a minute,” or “a pentest report in days.” We also punish hidden friction. Opaque pricing, heavy change management, and thin integrations can turn a good model into a stalled rollout.

Scores are editorial. They reflect what a smart buyer can reasonably expect, based on published limits, typical deployment patterns, and how clear the product feels. When pricing is quote-based, we say so. If a number is an estimate from third parties, we label it that way.
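The weighted scoring described above can be sketched in a few lines. The weights come from the seven criteria listed; the criterion keys, the 0 to 5 rating scale, and the example ratings are assumptions for illustration and do not reproduce any score in this list.

```python
# Weights from the seven criteria above; they sum to 1.0.
WEIGHTS = {
    "value_for_money": 0.20,
    "feature_depth": 0.20,
    "ease_of_setup": 0.15,
    "integrations": 0.15,
    "ux_performance": 0.10,
    "security_trust": 0.10,
    "support_community": 0.10,
}


def weighted_score(ratings: dict[str, float]) -> float:
    """Combine per-criterion ratings (assumed 0-5 scale) into one score."""
    missing = WEIGHTS.keys() - ratings.keys()
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    total = sum(WEIGHTS[name] * ratings[name] for name in WEIGHTS)
    return round(total, 1)


# Hypothetical ratings for an imaginary tool, not taken from this article.
example = {
    "value_for_money": 4.0,
    "feature_depth": 4.5,
    "ease_of_setup": 3.5,
    "integrations": 4.0,
    "ux_performance": 4.0,
    "security_trust": 3.0,
    "support_community": 3.5,
}
print(weighted_score(example))  # prints 3.9
```

Rounding to one decimal mirrors how the scores are displayed; the underlying ratings remain editorial judgments.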

1. Listen Labs

Listen Labs positions itself as an AI-led research shop you can spin up on demand. The team frames the product around research craft, not just automation. Its flow starts with a study, then recruitment, then AI-moderated interviews, then an executive-ready report.

Tagline: Overnight interviews that land as decisions, not transcripts.

Best for: a solo product marketer; a PM team that needs weekly customer proof.

- AI-moderated interview pipeline → get themes and clips without scheduling chaos.

- Recruiting + reporting in one flow → saves 2 handoffs between ops and analysis.

- Study-to-report workflow → time-to-first-value can be same day for a simple study.

Pricing & limits: From $0/mo to start via “Try for Free”; paid pricing is not posted. One third-party guide cites roughly $85–$150 per completed interview for some enterprise quotes. Trial length and caps are not clearly published.

Honest drawbacks: If you need tight control over methodology, AI moderation may feel risky. Pricing opacity can also block procurement until late. Beats traditional panels on speed; trails a dedicated research team on nuance.

Verdict: If you need customer reality fast, this gets you usable insights in 24–48 hours.

Score: 3.6/5

2. Gladia

Gladia is a speech-to-text company selling to builders who care about latency and cost. The team leans into developer ergonomics, with docs, a playground, and clear concurrency limits. Pricing is unusually transparent for voice infrastructure.

Tagline: Ship transcription that stays fast, multilingual, and budgetable.

Best for: an AI voice-agent team; a contact-center platform PM.

- Real-time and async STT in one vendor → reduce vendor sprawl and rework.

- Free tier + self-serve rates → saves days of sales calls for early prototypes.

- Published concurrency limits → time-to-first-value can be under an hour for an API POC.

Pricing & limits: From $0.75/hour real-time and $0.61/hour async, plus 10 hours free per month on self-serve. Self-serve includes 30 real-time and 25 async concurrent requests. Enterprise adds items like zero data retention and SLAs.

Honest drawbacks: “Hours” pricing is great, yet forecasting can still be messy at scale. Some enterprise controls sit behind sales. Beats DIY Whisper hosting on simplicity; trails a fully bespoke ASR stack on custom tuning.
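A rough forecast helps with the budgeting point above. This sketch uses the self-serve rates and free allowance cited in this entry; how the free hours are split between real-time and async usage is an assumption here, since the billing rule is not stated in the source.

```python
REALTIME_RATE = 0.75  # USD per audio hour, from the pricing cited above
ASYNC_RATE = 0.61     # USD per audio hour, from the pricing cited above
FREE_HOURS = 10.0     # free hours per month on self-serve


def monthly_cost(realtime_hours: float, async_hours: float) -> float:
    """Estimate a monthly bill in USD.

    Assumption: free hours offset async usage first, then real-time.
    """
    async_billable = max(0.0, async_hours - FREE_HOURS)
    free_left = max(0.0, FREE_HOURS - async_hours)
    realtime_billable = max(0.0, realtime_hours - free_left)
    cost = realtime_billable * REALTIME_RATE + async_billable * ASYNC_RATE
    return round(cost, 2)


# Hypothetical workloads: a prototype and a growing product.
print(monthly_cost(realtime_hours=5, async_hours=5))
print(monthly_cost(realtime_hours=1000, async_hours=500))
```

Running it over a range of expected volumes gives a budget envelope before any sales conversation.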

Verdict: If you need reliable STT quickly, this gets you production-grade transcripts in days, not quarters.

Score: 4.0/5

3. Rilla

Rilla sells AI coaching for field sales, with a clear bias toward “coach faster” moments. The team’s messaging centers on post-call analysis and manager workflows, including hands-free coaching. It’s built for operators who live in a car, not in a dashboard.

Tagline: Turn ride-time into coaching that actually sticks.

Best for: a home-services sales manager; a regional VP running distributed reps.

- Hands-free coaching mode → give feedback while driving, not after hours.

- Conversation analysis + assistant Q&A → typically saves a full note-taking pass per call.

- Manager-first UX → time-to-first-value can be one week with a small team rollout.

Pricing & limits: Quote-based; evaluation starts with a demo, and public pricing is not listed. A third-party pricing breakdown estimates a $20,000+ annual minimum and roughly $4,000+ per user per year, with annual contracts. Trial terms are unclear.

Honest drawbacks: Budget can be a deal-breaker for small teams. Some buyers also dislike “insight latency” after recordings end, per third-party commentary. Beats generic call recorders at coaching flow; trails low-cost tools on accessibility.

Verdict: If you need reps improving weekly, this helps you systematize coaching within a month.

Score: 3.6/5

4. Blossom

Blossom is a virtual mental health provider blending therapy and medication management. The team emphasizes insurance coverage and easier access, which matters more than “AI” in this category. The product promise is simple: remove friction between need and care.

Tagline: Get to treatment without turning scheduling into a second job.

Best for: an insured patient who wants virtual psychiatry; a busy professional seeking ongoing support.

- Insurance-accepted psychiatric care → reduce out-of-pocket surprise for many patients.

- Virtual visits + targeted meds → saves commute time and coordination steps.

- Simple onboarding → time-to-first-value can be days, depending on scheduling availability.

Pricing & limits: $0/mo to get started; patient cost is per visit. Blossom states most patient copays are $0–$22 and that it accepts most major insurances. SaaS-style trial terms do not apply.

Honest drawbacks: Insurance-driven billing can still surprise you if deductibles reset. Availability depends on provider scheduling in your state. Beats marketplace directories on guided flow; trails a long-term local clinician on continuity.

Verdict: If you want covered virtual care, this helps you start treatment within weeks, not months.

Score: 3.5/5

5. Omnea

Omnea is building procurement orchestration with an AI-native intake front door. The team focuses on governance that users will actually follow, which is the hardest procurement problem. It reads like a platform meant to unify intake, approvals, supplier data, and PO automation.

Tagline: Make every purchase request traceable, approvable, and fast.

Best for: a mid-market procurement lead; a finance ops team drowning in ad hoc spend.

- Conversational intake + structured workflows → reduce back-and-forth and missing details.

- ERP-linked PO automation → saves 2–3 manual re-entry steps per purchase request.

- Single “source-to-pay” hub → time-to-first-value can be 2–6 weeks with focused scope.

Pricing & limits: Quote-based; evaluation starts with a demo, and pricing is not published. Trial length is not listed. Usage caps likely depend on intake volume, workflows, and integrations.

Honest drawbacks: Enterprise procurement rollouts can stall on internal politics. If you need only AP automation, this may feel like a bigger platform than required. Beats lightweight forms on governance; trails best-of-breed suites on deep niche modules.

Verdict: If you need spend control without user revolt, this helps you enforce process within one quarter.

Score: 3.8/5

6. Adaptive Security

Adaptive Security sells security awareness as something people won’t hate. The team leans into modern threats, including deepfakes and multichannel phishing simulations. It’s positioned for organizations that want behavior change, not checkbox training.

Tagline: Train humans like they’re part of the security perimeter.

Best for: an IT director at an SMB; a security lead fighting social engineering risk.

- Deepfake and multichannel simulations → expose real weaknesses before attackers do.

- Employee-based licensing model → saves procurement cycles versus per-module pricing.

- Program-style rollout → time-to-first-value can be 1–2 weeks after content setup.

Pricing & limits: Quote-based; pricing is custom and scales by employee count. Trial length is not listed publicly. Usage caps depend on the tier and program scope.

Honest drawbacks: Custom pricing slows fast “buy now” decisions. Buyers who want fully self-serve onboarding may feel blocked. Beats generic LMS training on realism; trails budget tools on sticker price.

Verdict: If you want fewer security mistakes, this helps you harden behavior in a few weeks.

Score: 4.0/5

7. Avoca

Avoca pitches itself as an “AI workforce” for service businesses, not a chatbot. The team anchors on revenue outcomes: booking leads, nurturing customers, and coaching staff. Deep ServiceTitan integration is a key part of the story.

Tagline: Answer every lead, then book more jobs without extra headcount.

Best for: a home-services operator; an SMB support leader on after-hours coverage.

- AI inbound responder across calls and chats → stop missed leads and lost weekends.

- CRM and ServiceTitan-style integrations → save 2 manual logging steps per interaction.

- Operator-friendly onboarding → time-to-first-value can be a week with tight scripts.

Pricing & limits: Quote-based; evaluation starts with a demo, and public pricing is not listed. Trial length and usage caps are not published on the main site pages. Expect pricing to depend on channels, volume, and integrations.

Honest drawbacks: Without clear posted pricing, ROI math starts later than it should. Voice automation can also demand careful tuning to avoid brand damage. Beats basic answering services on consistency; trails a live team on edge-case empathy.

Verdict: If you want more booked work, this helps you capture demand within 30–60 days.

Score: 3.8/5

8. Traba

Traba is a labor marketplace with a worker-first mobile experience. The team’s “product” is flexibility: browse shifts, accept work, and track it in-app. For operators, the real outcome is staffing coverage without endless recruiting.

Tagline: Fill shifts fast, and let workers choose the schedule.

Best for: an hourly worker seeking flexible shifts; an ops manager handling seasonal spikes.

- Shift marketplace model → reduce time spent sourcing last-minute coverage.

- In-app matching and tracking → saves a few coordination messages per shift.

- Mobile-first UX → time-to-first-value can be same day for workers in active markets.

Pricing & limits: From $0/mo for workers; the iOS app is listed as free. Business-side pricing is not shown in the App Store listing. Trial length is not relevant for workers, and marketplace availability varies by location.

Honest drawbacks: Marketplace liquidity is uneven by region. Workers may face waitlists for popular shifts. Beats traditional temp agencies on speed; trails direct hiring on long-term retention.

Verdict: If you need flexible labor, this helps you cover shifts within days, sometimes hours.

Score: 3.4/5

9. Harmonic

Harmonic is aiming at formal mathematical reasoning, not casual “math help.” The team’s differentiator is correctness, with outputs designed to be verifiable. In practice, that means fewer hallucinations when precision is the whole point.

Tagline: Get math you can trust, not math that “sounds right.”

Best for: an applied research team; an engineering org that needs formal proofs.

- Formal reasoning orientation → reduce time lost validating shaky model answers.

- Platform plus upcoming API direction → saves custom infra work for early adopters.

- Research-to-product trajectory → time-to-first-value depends on access and use case fit.

Pricing & limits: Access is by request; public end-user pricing is not clearly posted on the main site. Trial length is not listed. Usage caps likely depend on plan and API availability.

Honest drawbacks: This category can feel “too advanced” for most business users. Integration into everyday workflows may be immature versus general LLM tools. Beats general chat models on rigor; trails them on breadth and convenience.

Verdict: If you need provable reasoning, this helps you validate critical math within weeks of adoption.

Score: 3.3/5

10. Ambience Healthcare

Ambience Healthcare is an ambient clinical documentation company built for health systems. The team’s north star is clinician time back, with tight EHR integration as the unlock. It’s designed to feel like a workflow layer, not a separate app.

Tagline: Finish notes faster, without turning visits into typing sessions.

Best for: a health system CMIO; a multi-specialty group rolling out scribes at scale.

- Ambient note generation → reduce after-hours documentation and note backlog.

- EHR-embedded approach → saves 1–2 copy-paste loops per encounter.

- Enterprise deployment motion → time-to-first-value is often weeks, not days.

Pricing & limits: From about $233–$267 per provider per month for core usage, based on analyst estimates of $2,800–$3,200 per provider annually. Public list pricing is not posted. Trial length and caps are negotiated for enterprise contracts.
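The monthly range above follows directly from the annual analyst estimates. A quick check, with the helper name chosen purely for illustration:

```python
def per_month(annual_usd: float) -> int:
    """Convert an annual per-provider price to a rounded monthly figure."""
    return round(annual_usd / 12)


# Analyst estimates cited above: $2,800-$3,200 per provider per year.
low, high = per_month(2800), per_month(3200)
print(f"${low}-${high} per provider per month")  # prints $233-$267 per provider per month
```

The same conversion makes it easier to compare vendors that quote annually against those that quote monthly.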

Honest drawbacks: Enterprise rollout means IT coordination and change management. If you want self-serve, this is not that. Beats human scribe staffing on scalability; trails a great human scribe on bedside nuance.

Verdict: If you want fewer burned-out clinicians, this helps you cut documentation time within one quarter.

Score: 4.1/5

11. Tennr

Tennr is built around a painfully specific job: turning messy referrals into scheduled, payable visits. The team sells “fax to first visit” as the journey, with automation that extracts, checks, and routes documentation. It’s revenue ops, dressed as patient experience.

Tagline: Convert more referrals by making intake payor-ready.

Best for: a specialty clinic ops lead; a revenue cycle leader fighting denials.

- Referral intake automation → reduce delays caused by missing or misread documents.

- Structured extraction from unstructured records → saves a manual review pass per referral packet.

- Pipeline-style workflow → time-to-first-value can be 2–6 weeks with tight scope.

Pricing & limits: Quote-based; engagement starts with a sales conversation, and public pricing is not posted. Trial length is not listed publicly. Caps likely depend on referral volume, sites, and integrations.

Honest drawbacks: You will need process discipline, or automation just moves chaos faster. Integration work may be non-trivial for legacy intake stacks. Beats generic OCR on workflow outcomes; trails custom-built internal tooling on perfect fit.

Verdict: If denials and delays are killing growth, this helps you tighten intake within a few months.

Score: 3.7/5

12. XBOW

XBOW is a security product that frames pentesting like a repeatable, on-demand service. The team pushes a bold guarantee: “exploit-validated finding or you don’t pay” for Lightspeed tests. It’s aimed at teams that need coverage without waiting on calendars.

Tagline: Get real pentest depth on a software release timeline.

Best for: a startup security lead; a compliance-driven SaaS team shipping weekly.

- On-demand autonomous pentest → catch exploitable issues before audits and launches.

- Fast audit-ready reports → saves weeks of back-and-forth with external testers.

- Clear per-test packaging → time-to-first-value can be under a week.

Pricing & limits: From $4,000 per test for Plus and $8,000 per test for Premium. Lightspeed reports are positioned as delivered within 5 business days after testing begins. Enterprise is quote-based for continuous coverage.

Honest drawbacks: Per-test pricing can sting if you need constant coverage. Mobile and standalone API testing are described as “coming in 2026,” so scope may be web-first today.

Verdict: If you need defensible security fast, this helps you ship safer releases within days.

Score: 3.8/5

13. OpenRouter

OpenRouter is an aggregation layer for model access, routing, and spend controls. The team’s value is optionality: pick models, manage keys, and control budgets in one place. It’s infrastructure for teams who want leverage, not loyalty.

Tagline: Build with many models, pay once, and stay in control.

Best for: an indie builder shipping an AI feature; a platform team managing model spend.

- Unified API across providers → swap models without rewriting integrations.

- Budgets and activity logs → saves hours chasing cost spikes after the fact.

- Fast start with free tier → time-to-first-value can be under 30 minutes.

Pricing & limits: From $0/mo on the free tier with a 50 requests/day limit. Pay-as-you-go uses credits with pass-through provider pricing, plus a fee when purchasing credits. Credits may expire after one year per the FAQ.

Honest drawbacks: You still inherit provider outages and quirks. Security teams may prefer direct vendor contracts for audit simplicity. Beats single-provider lock-in on flexibility; trails bespoke infra on fine-grained control.
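The "swap models without rewriting integrations" point can be sketched with the standard library alone. This assumes OpenRouter's OpenAI-style chat completions endpoint; the API key and both model IDs below are placeholders, and the sketch builds requests without sending them.

```python
import json
import urllib.request

# OpenAI-style chat completions endpoint exposed by OpenRouter.
API_URL = "https://openrouter.ai/api/v1/chat/completions"


def build_request(api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a chat completion request for the given model."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholder model IDs: substitute real IDs from the provider catalog.
for model in ("vendor-a/model-small", "vendor-b/model-large"):
    req = build_request("sk-placeholder", model, "Summarize this ticket.")
    print(req.get_method(), req.full_url, json.loads(req.data)["model"])
```

Only the `model` string changes between calls, which is the whole appeal of a routing layer. Passing a request to `urllib.request.urlopen` would send it, at which point provider outages and quirks still apply.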

Verdict: If you need model choice without chaos, this helps you ship and iterate within days.

Score: 4.2/5

14. Harvey

Harvey is built for legal work, with a posture that says “enterprise by default.” The team’s ambition is broad legal acceleration: research, drafting, and analysis. It’s not trying to be a cheap add-on. It’s trying to be the system lawyers reach for first.

Tagline: Move legal work from hours to drafts, with fewer dead ends.

Best for: a large law firm practice group; an in-house legal ops team at scale.

- Legal-native AI workflows → reduce first-draft time across research and review.

- Enterprise procurement motion → saves shadow-IT risk and tool sprawl.

- High-touch rollout → time-to-first-value is often measured in months, not days.

Pricing & limits: Quote-based; evaluation runs through sales, and public pricing is not posted. One third-party review claims an estimated $24,000/month minimum based on seat minimums, with no free trial listed. Treat figures as directional.

Honest drawbacks: Cost and minimums can exclude smaller teams immediately. If you need simple CLM, a specialist tool may fit better. Beats general LLMs on legal framing; trails them on price and experimentation speed.

Verdict: If you run high-volume legal work, this helps you standardize output within a quarter or two.

Score: 3.5/5

15. Vivodyne

Vivodyne is a biotech company combining tissue engineering, robotics, and AI models. The team’s core bet is “preclinical certainty,” using human-relevant systems earlier in drug development. That makes it less like software and more like a platform partnership.

Tagline: Get better human data before you gamble on clinical trials.

Best for: a translational science leader; a biotech partnering team hunting higher-signal assays.

- Scalable human tissue platforms → reduce dependence on animal proxies in early decisions.

- Robotics + AI modeling combo → saves cycles by running more experiments per program.

- Partnership-style engagement → time-to-first-value is often months, tied to study design.

Pricing & limits: Quote-based; pricing is not published and likely varies by partnership scope. Trial length is not listed. Capacity, timelines, and deliverables depend on program design.

Honest drawbacks: This is not plug-and-play. Budget and lead times can be significant, since the “product” includes lab execution. Beats standard CRO work when you need novel human models; trails simpler assays on turnaround.

Verdict: If you need stronger preclinical signals, this helps you de-risk programs over a quarter or two.

Score: 3.2/5

16. Abridge

Abridge sits in the same ambient documentation universe as other clinical AI scribes. The team frames the product around health-system deployment, especially with EHR integration. The promise is fewer clicks and cleaner notes, without adding another burden.

Tagline: Turn conversations into clinical notes clinicians will actually sign.

Best for: a hospital innovation lead; a specialty group standardizing documentation quality.

- Ambient note creation → reduce after-visit charting and burnout risk.

- Enterprise EHR integration posture → saves repeated copy-paste into templates.

- Standardized deployment model → time-to-first-value is often measured in months.

Pricing & limits: From an estimated $200–$600 per clinician per month, per a third-party comparison, with enterprise contracts. Another analyst source estimates about $2,500 per clinician per year. Public list pricing is not posted.

Honest drawbacks: If you are a small practice wanting self-serve, enterprise sales is heavy. Integrations can constrain speed if IT is overloaded. Beats generic dictation on structure; trails small tools on time-to-start.

Verdict: If you want system-wide documentation relief, this helps you see measurable impact within one quarter.

Score: 4.1/5

17. Glean

Glean is an enterprise “Work AI” platform built around search, assistants, and agents across company data. The team sells a vision of one place to ask, find, and act. It’s meant to reduce the daily tax of “where is that doc?”

Tagline: Find answers across the company, then turn them into actions.

Best for: a large IT team enabling knowledge access; a revenue org tired of context switching.

- Cross-app enterprise search → reduce time spent hunting internal information.

- Connector-driven ecosystem → saves repeated re-indexing and manual knowledge curation.

- Enterprise rollout model → time-to-first-value can be 4–8 weeks with key connectors.

Pricing & limits: From $0/mo to start via demo; public list pricing is not posted. A third-party guide estimates about $45–$50+ per user per month, with potential add-ons for AI features and annual minimums. Treat as directional.

Honest drawbacks: Per-seat pricing can punish “light users.” Search quality lives or dies on connector coverage and permission hygiene. Beats DIY search on UX; trails specialized tools on single-domain depth.

Verdict: If you need faster knowledge retrieval, this helps you reduce search time within one quarter.

Score: 4.0/5

18. Prepared

Prepared is built for public safety answering points, where the work is noisy, high-stakes, and overloaded. The team’s framing is “assistive AI,” with a single-screen view that pulls in multimedia and summarizes chaos. It’s designed to help humans hear more, not care less.

Tagline: Catch details in emergencies when humans can’t afford to miss them.

Best for: a 911 director modernizing operations; a supervisor improving call-taking consistency.

- AI insights and summaries → reduce missed details under stress.

- Multimedia intake readiness → saves switching between separate tools and screens.

- Vendor-agnostic integration posture → time-to-first-value can be weeks with scoped deployment.

Pricing & limits: From $0/mo to start via sales; pricing is not posted publicly. Trial length is not listed. Limits likely depend on call volume, media types, and integrations.

Honest drawbacks: Government procurement cycles are slow, even when the product is strong. AI in emergency response demands careful policy and training. Beats legacy CAD add-ons on modern media handling; trails simpler tools on procurement ease.

Verdict: If you need clearer call handling, this helps you standardize response quality within a few months.

Score: 3.8/5

19. Mashgin

Mashgin is building AI-powered checkout hardware meant to make lines optional. The team markets speed as the killer feature, with a “place items and go” interaction. It’s a retail ops tool that changes throughput, not just UI.

Tagline: Move more customers through checkout in seconds.

Best for: a stadium or venue operator; a convenience retailer optimizing labor and speed.

- Camera-based item recognition → reduce scanning friction and cashier intervention.

- Fast item onboarding claims → saves repeated catalog setup steps for new inventory.

- Hardware-first deployment → time-to-first-value can be weeks after install and training.

Pricing & limits: From $0/mo to start with sales; public pricing is not posted. Mashgin makes performance claims, such as 5-second checkout and item recognition accuracy, but costs are quote-based. Trial length and caps depend on deployment terms.

Honest drawbacks: Hardware rollouts require on-site ops coordination. Edge cases still exist, and exception handling matters. Beats traditional self-checkout on speed claims; trails staffed checkout on complex customer situations.

Verdict: If you need throughput, this helps you reduce lines within one rollout cycle.

Score: 4.0/5

20. Grammarly

Grammarly is no longer “new,” yet it remains a practical AI writing layer for daily work. The team’s edge is distribution across apps and a polished feedback loop. It’s less about magic prompts and more about consistent micro-improvements.

Tagline: Write clearer, faster, and with fewer embarrassing mistakes.

Best for: a busy individual contributor; a small team standardizing tone and quality.

- Real-time writing support → reduce revision cycles and last-minute fixes.

- Cross-app presence → saves constant copy-paste into separate AI tools.

- Low learning curve → time-to-first-value is minutes after installing or logging in.

Pricing & limits: From $0/mo for Free. Pro is $30/member/month monthly, or $12/member/month billed annually, per Grammarly’s pricing help. Pro includes a stated allowance of 2,000 AI prompts. Pro supports up to 149 seats.

Honest drawbacks: Suggestions can be stylistically wrong, even when grammar is right. Some teams will balk at sending sensitive text to any cloud tool. Beats generic chat on inline UX; trails a human editor on voice and intent.

Verdict: If you want consistently cleaner writing, this helps you improve output the same day.

Score: 4.4/5

21. 6sense

6sense is a revenue intelligence platform that blends data, intent, and workflow for sales. The team’s pitch is “prospect more efficiently,” with predictive AI in the loop. It’s built for pipeline creation, not just dashboards.

Tagline: Focus reps on accounts most likely to convert.

Best for: a B2B SDR team; a sales ops leader standardizing targeting.

- Account and contact insights → reduce wasted outreach to cold, wrong-fit accounts.

- Free tier entry point → saves a procurement cycle for initial testing.

- Workflow and alerts → time-to-first-value can be under a week for basic prospecting.

Pricing & limits: From $0/mo on the Sales Intelligence Free tier with 50 data credits per month. Paid pricing for broader platform modules is typically quote-based and not fully posted on this page. Trial length is not stated here.

Honest drawbacks: The “full” value usually requires clean CRM data and tight process. Many orgs underuse it after the initial excitement. Beats list vendors on prioritization; trails simpler tools on onboarding ease.

Verdict: If you want higher-quality outbound, this helps you tighten targeting within a month.

Score: 3.8/5

22. Checkr

Checkr is a background check platform designed for hiring velocity and workflow integration. The team’s value is operational: run checks, track progress, and keep candidates moving. It’s a “less time waiting” product, which is the real metric.

Tagline: Clear candidates faster, without compliance chaos.

Best for: a high-volume recruiter; an ops team hiring hourly roles at scale.

- Tiered screening packages → match checks to roles and reduce over-screening.

- API and integrations posture → saves manual status chasing in ATS workflows.

- Self-serve for smaller volumes → time-to-first-value can be same day after signup.

Pricing & limits: From $29.99 per report for Basic, $54.99 for Essential, and $89.99 for Complete. Add-ons are priced per check, and continuous criminal search is listed at $1.70 per individual per month. Custom quotes apply for 300+ checks per year.

Honest drawbacks: Per-report pricing can add up fast in churny roles. Support expectations should match a platform model, not a white-glove agency. Beats legacy screeners on self-serve clarity; trails boutique firms on concierge handling.

Verdict: If you need faster hiring clears, this helps you reduce delays within weeks.

Score: 4.0/5

23. Textio

Textio is an augmented writing tool aimed at hiring and feedback language. The team’s core job is reducing bias and improving clarity while you write, not after you post. It’s designed for teams that hire a lot and can’t afford inconsistent messaging.

Tagline: Write job posts that attract the right people, faster.

Best for: a recruiting operations lead; an HR team standardizing job description quality.

- Augmented writing guidance → reduce rewrites and improve posting consistency.

- Annual contract model → saves piecemeal tool stacking across recruiting teams.

- Docs-like workflow → time-to-first-value can be a day once access is provisioned.

Pricing & limits: From about $2,056/mo when annualized, based on Vendr’s reported median annual price of $24,677. Textio does not publish list pricing on its own site, and contracts vary. Trial length and caps are not publicly standardized.

Honest drawbacks: This can feel expensive for “just writing,” unless hiring volume is high. Teams can also over-optimize scores instead of substance. Beats generic AI on HR-specific guidance; trails free tools on budget friendliness.

Verdict: If you want better job posts at scale, this helps you improve language quality within weeks.

Score: 3.7/5

24. Dataiku

Dataiku is an enterprise AI platform that tries to unify analytics, ML, and governance. The team sells “universal” collaboration across technical and less-technical users. In practice, it’s a control plane for models, data, and now GenAI projects.

Tagline: Build, govern, and deploy AI without duct-taping ten tools.

Best for: an enterprise data science team; a platform leader standardizing AI delivery.

- End-to-end platform scope → reduce toolchain sprawl across teams.

- Free Edition and cloud trial → saves weeks before committing budget.

- Cloud trial “ready in minutes” → time-to-first-value can be same day for demos.

Pricing & limits: From $0/mo with the Free Edition, limited to up to 3 users and without deployment and governance features. Dataiku also offers a 14-day Dataiku Cloud trial with limited users. Paid editions are contact-sales.

Honest drawbacks: Platform breadth can overwhelm teams that just need notebooks. Real success still requires strong data engineering and governance discipline. Beats DIY stacks on governance; trails lighter tools on setup simplicity.

Verdict: If you need enterprise AI standardization, this helps you operationalize projects within one quarter.

Score: 4.0/5

25. AlphaSense

AlphaSense is an enterprise intelligence and research platform built for fast, credible discovery. The team targets analysts who live on transcripts, filings, and internal knowledge. The value is speed-to-insight when the question is expensive to answer wrong.

Tagline: Find the signal in markets and competitors before the meeting starts.

Best for: a strategy analyst; an investment research team working under deadlines.

- AI search across premium content → reduce time spent skimming long documents.

- Enterprise subscription posture → saves patchwork subscriptions across teams.

- Analyst-friendly UX → time-to-first-value can be same week after onboarding.

Pricing & limits: From about $1,458/mo when annualized, based on Vendr’s reported median annual price of $17,500. AlphaSense itself states pricing is via annual subscriptions and requires contacting the team. Trial length and caps are contract-based.

Honest drawbacks: Cost can be hard to justify for occasional research. You may also pay for content packages your team underuses. Beats general web search on depth; trails cheaper tools on accessibility.

Verdict: If you need faster research, this helps you answer stakeholder questions in hours, not days.

Score: 4.0/5

26. Labelbox

Labelbox is an annotation and data operations platform aimed at teams training models responsibly. The team’s core product is workflow: manage labeling, quality, and now model-assisted labeling economics. It’s about throughput without trash data.

Tagline: Turn raw data into training-ready datasets without losing control.

Best for: an applied ML team; a computer vision lead scaling labeling operations.

- Limits metered in Labelbox Units (LBUs) → reduce surprise constraints as teams scale.

- Foundry and add-ons model → saves manual pre-labeling steps when using model runs.

- API-first posture → time-to-first-value can be a day for a small pilot dataset.

Pricing & limits: From $0/mo on Free with 500 LBUs free each month. Starter is listed with a fixed rate of $0.10 per LBU, and Enterprise removes LBU limits. Trial length is not described in these limit docs.

Honest drawbacks: Cost forecasting can be tricky if you do not track LBU drivers. Workflow complexity is real, and teams need process ownership. Beats ad hoc labeling on governance; trails simple spreadsheets on learning curve.

Verdict: If you need scalable labeling ops, this helps you ship datasets reliably within weeks.

Score: 4.0/5

27. Ironclad

Ironclad is a contract lifecycle management platform with AI layered into legal workflows. The team’s bet is that legal teams want speed, but also guardrails. Done well, CLM is less “software” and more “time returned to the business.”

Tagline: Get contracts out the door without losing legal control.

Best for: an in-house legal team; a procurement org standardizing approvals.

- CLM workflow standardization → reduce cycle time from request to signature.

- API and integration posture → saves duplicate data entry across CRM and ERP tools.

- Guided deployment options → time-to-first-value is often 2+ months for full rollout.

Pricing & limits: From $0/mo to start via sales; Ironclad does not list public prices on its pricing page. A third-party review estimates around $5,000/month for some contracts, with annual minimums. Treat as directional.

Honest drawbacks: Implementation effort is real, especially with clause libraries and approvals. Smaller teams may not get ROI fast enough. Beats email-driven contracting on auditability; trails lightweight e-sign on simplicity.

Verdict: If contracts are slowing revenue, this helps you shorten cycle time within one or two quarters.

Score: 3.9/5

28. Fictiv

Fictiv is a manufacturing sourcing platform that wraps a managed supplier network with software visibility. The team’s angle is reducing risk and complexity, especially when supply conditions change fast. It’s a procurement and engineering tool wearing an operations badge.

Tagline: Source custom parts with fewer surprises and less back-and-forth.

Best for: a hardware startup buyer; an engineering team juggling multiple suppliers.

- Managed partner network → reduce time vetting and coordinating manufacturers.

- Preferred pricing programs for teams → saves repeated negotiation cycles at volume.

- Software-guided quoting flow → time-to-first-value can be days for a first quote.

Pricing & limits: From $0/mo to start; pricing is primarily per part and per order, not a simple subscription. Preferred Pricing is negotiated based on volume and engagement length. Trial length is not framed like SaaS.

Honest drawbacks: You may have less control over individual supplier choice than direct sourcing. Lead times still depend on real-world manufacturing constraints. Beats cold sourcing on reliability; trails a dedicated procurement team on bespoke negotiation.

Verdict: If you need parts without sourcing chaos, this helps you move from CAD to order within weeks.

Score: 3.8/5

29. Akkio

Akkio positions itself as an AI analytics platform for media agencies, built around domain-specific agents. The team emphasizes speed, especially in audience creation and exploration. It reads like a bid to turn analytics into a conversational, repeatable workflow.

Tagline: Go from campaign questions to usable answers in minutes.

Best for: a media agency strategist; an analytics lead supporting multiple client teams.

- Agency-specific agents → reduce time building repeat analyses from scratch.

- Customization and integrations focus → saves manual exports and reformatting across tools.

- Platform-style deployment → time-to-first-value can be a few weeks for real client workflows.

Pricing & limits: From $0/mo to start a sales conversation; pricing is explicitly described as custom. Trial length is not published on the pricing page. Usage caps and support terms depend on contract scope.

Honest drawbacks: Custom pricing slows small teams that want quick self-serve. If your agency lacks clean data plumbing, “AI” will not fix fundamentals. Beats generic BI on agency framing; trails self-serve tools on immediate purchase.

Verdict: If you want faster client-ready analytics, this helps you compress timelines within one quarter.

Score: 3.8/5

30. ZeroEyes

ZeroEyes is a security company using computer vision for gun detection on existing camera systems. The team emphasizes proactive detection and human verification, plus a no-facial-recognition stance. It’s built for schools and public venues that need faster alerts without more hardware.

Tagline: Detect a visible firearm fast, then alert the right people.

Best for: a school district security lead; a venue operator upgrading safety without checkpoints.

- Camera-stream-based detection → reduce need for new entrance hardware deployments.

- Pricing tied to streams → saves lengthy per-device re-scoping during expansion.

- Install on existing cameras → time-to-first-value can be weeks after planning and setup.

Pricing & limits: From less than $400/year per detection point, per the pricing page. ZeroEyes also states most clients pay less than $60 per camera stream each month, with discounts for larger counts. Trial length and exact caps depend on camera scale and contract length.

Honest drawbacks: Performance depends on camera placement and coverage planning. This detects visible, brandished guns, not concealed weapons, so it is not a full security strategy. Beats metal detectors on frictionless flow; trails staffed screening on certain threat types.

Verdict: If you need faster situational awareness, this helps you extend safety coverage within one deployment cycle.

Score: 3.8/5

Trends defining the next wave of hottest ai startups

1. Explosion of AI-native companies built with AI at the core

AI-native means the product cannot exist without models. It also means the company runs itself with AI. We now see startups using agents for support, QA, and internal research. That changes cost structure and speed.

In our infrastructure telemetry, AI-native teams behave differently. They ship more experiments and more small services. They also demand better observability. When every feature touches inference, monitoring becomes product.

2. Lower barriers to building AI products with modern tools, frameworks, and cloud access

Barriers fell because the stack matured. Model APIs, open weights, and hosted vector search are accessible. That democratizes prototypes. It also increases competition.

The winners handle the new bottleneck. The bottleneck is not training. The bottleneck is data quality, evaluation, and integration. Teams that treat those as first-class concerns ship faster, with fewer rollbacks.

3. Vertical AI specialization across healthcare, finance, logistics, and legal

Vertical AI wins because context is everything. A general assistant can draft. A vertical assistant can comply. It knows the forms, the edge cases, and the escalation paths.

We see this in deployment architecture. Vertical teams invest in auditability. They log evidence, citations, and tool actions. That matters in regulated workflows. It also builds buyer confidence.

4. Shift from AI tools to AI coworkers: agents, copilots, and autonomous systems

Tools answer questions. Coworkers complete tasks. That shift changes the security model. An agent needs permissions, not just prompts. It also needs constraints, approvals, and rollback paths.

We like startups that treat agents as “bounded automation.” They define allowed actions and safe toolchains. They also ship human-in-the-loop review. That keeps autonomy useful, not reckless.
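To make “bounded automation” concrete, here is a minimal sketch, not any vendor's implementation. It assumes a hypothetical `BoundedAgent` class with an allowlist of tools, a set of tools that require human sign-off, and an audit log. The tool names (`lookup_order`, `issue_refund`) and the approval rule are illustrative only.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Set


def deny_all(tool: str, args: dict) -> bool:
    """Default reviewer: nothing is approved unless a human says so."""
    return False


@dataclass
class BoundedAgent:
    """Hypothetical agent wrapper: allowlisted tools plus an approval gate."""
    tools: Dict[str, Callable[..., str]]
    needs_approval: Set[str] = field(default_factory=set)
    audit_log: List[str] = field(default_factory=list)

    def run(self, tool: str, approver: Callable[[str, dict], bool] = deny_all, **args) -> str:
        # 1. Anything outside the allowlist is refused and logged.
        if tool not in self.tools:
            self.audit_log.append(f"denied:{tool}")
            raise PermissionError(f"tool not allowed: {tool}")
        # 2. Risky tools wait for a human (or policy) decision.
        if tool in self.needs_approval and not approver(tool, args):
            self.audit_log.append(f"held:{tool}")
            return "pending human approval"
        # 3. Everything that runs leaves a trace.
        self.audit_log.append(f"ran:{tool}")
        return self.tools[tool](**args)


def make_demo_agent() -> BoundedAgent:
    """Build an agent with two illustrative tools; refunds need approval."""
    return BoundedAgent(
        tools={
            "lookup_order": lambda order_id: f"order {order_id}: shipped",
            "issue_refund": lambda order_id, amount: f"refunded {amount} on {order_id}",
        },
        needs_approval={"issue_refund"},
    )


agent = make_demo_agent()
print(agent.run("lookup_order", order_id="A1"))
print(agent.run("issue_refund", order_id="A1", amount=20))
print(agent.audit_log)
```

The design choice worth noting is that the default reviewer denies everything, so autonomy has to be granted explicitly rather than removed after an incident.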

5. Faster go-to-market cycles with rapid launches and iterations

Launch cycles compressed because distribution is now software-first. Teams ship a web app, then add APIs. Marketing happens in product. Usage becomes the story.

Speed has a cost. Fast cycles can create compliance debt. Hot startups manage that debt early. They build permissioning, tenant isolation, and clear data retention policies.

6. Enterprises testing startups earlier to accelerate adoption and visibility

Enterprises used to wait. Now they pilot early, then expand quickly. Procurement still slows things, yet pilots start anyway. Innovation teams often run “shadow trials.”

Startups that win these trials simplify deployment. They support SSO, SCIM, and least-privilege access. They also provide security artifacts without drama. That reduces buyer friction.

7. Hardware meets intelligence: infrastructure pressure from larger models and scaling demands

Model capability often implies more compute. That creates pressure on latency and cost. Operators care about batch inference, caching, and routing. Those details decide unit economics.

Infrastructure startups thrive in this gap. GPU cloud, model hosting, and inference optimization are now core categories. We also see new interest in “small, specialized” models. Efficiency is a feature.
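The caching and routing levers mentioned above can be sketched in a few lines. This is a toy illustration under stated assumptions: the per-call costs, the 20-word routing threshold, and the model names `small` and `large` are all hypothetical, and `infer` fakes the model call rather than hitting a real API.

```python
from functools import lru_cache

# Hypothetical per-call costs in dollars; real prices vary by provider.
COST = {"small": 0.001, "large": 0.02}
calls = {"small": 0, "large": 0}


def route(prompt: str) -> str:
    """Send short prompts to the cheap model, long ones to the big one."""
    return "large" if len(prompt.split()) > 20 else "small"


@lru_cache(maxsize=1024)
def infer(prompt: str) -> str:
    """Stand-in for a model call; repeated prompts are served from cache."""
    model = route(prompt)
    calls[model] += 1  # only incremented on a cache miss
    return f"[{model}] answer to: {prompt}"


def total_cost() -> float:
    """Spend so far, counting only calls that actually reached a model."""
    return sum(COST[m] * n for m, n in calls.items())


infer("What is our refund policy?")
infer("What is our refund policy?")  # cache hit, no new model call
print(calls, total_cost())
```

Even in this toy version, the second identical request costs nothing, which is the kind of detail that shapes unit economics at scale.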

8. Enterprise collaboration: co-building with startups to bridge legacy systems and innovation

Co-building is the new sales motion. Buyers want custom connectors and tool actions. Startups that offer professional services judiciously can accelerate adoption. Still, services must feed product.

We favor teams that build extensibility. A plugin layer beats a one-off integration. Shared primitives also help partners contribute. That is how ecosystems form around a product.

9. Ethical AI and transparency: bias reduction, data auditing, and accountability expectations

Ethics became operational. Buyers now ask for eval methods, not slogans. They want red-team results, not marketing. Hot startups respond with test harnesses and policy controls.

Transparency also helps adoption. When users can see sources and tool actions, trust rises. Guardrails then feel like collaboration, not restriction. That is good product psychology.

10. Talent redistribution as researchers and engineers move from big tech to startups

Talent moved because the frontier is exciting. Researchers want agency and speed. Engineers want ownership of systems end-to-end. Startups provide both, if culture supports it.

For us, the tell is hiring shape. Strong teams hire ops early. They hire security earlier than before. That signals they plan to survive real customers, not just demos.

11. GenAI moving into production environments as enterprise adoption accelerates

Production is where “AI” becomes “software.” Latency budgets appear. Error budgets appear. Change management appears. That is healthy, even if it slows experimentation.

We see a consistent production pattern. Companies start with RAG, then add tools, then add agents. After that, evaluation becomes continuous. The hottest startups ship those steps as a path, not a pile.

Funding, valuation, and traction signals behind hottest ai startups

1. Ranking signals used by curated lists: valuation, funding, and growth

Curated lists are imperfect filters. Still, they reveal consensus attention. The best ones use multiple signals. Funding alone is noisy. Growth alone can be paid for.

We cross-check lists against operational reality. Are customers deploying beyond one team? Are security reviews passing? Are integrations stable? Those “boring” answers predict survival.

2. Funding stages as a shorthand for maturity: Seed through late-stage rounds

Stage is shorthand, not destiny. Seed teams can ship serious products. Late-stage teams can still be searching. Yet stage affects expectations. Buyers assume maturity from later rounds.

Operators should treat early-stage tools carefully. Use sandboxes, scoped permissions, and clear exit plans. Hot startups appreciate that rigor. It makes partnerships healthier.

3. Investor patterns shaping the hottest ai startups ecosystem

Investor patterns matter because they shape behavior. Some investors push “ship fast at any cost.” Others push compliance and durability. The cap table can influence product decisions.

We also track investor specialization. Model-layer investors differ from vertical SaaS investors. Infra investors differ from consumer investors. Fit between investor and motion reduces strategic thrash.

4. Corporate participation and strategic alignment with major cloud and AI platforms

Corporate participation can mean distribution. It can also mean constraints. When a startup aligns with a cloud marketplace, procurement is easier. When it aligns too tightly, optionality shrinks.

The strongest teams keep portability. They support multiple clouds or clean abstractions. They also design for data residency needs. That makes enterprise deals less fragile.

5. Channel, reseller, and partner programs as distribution accelerators

Channels matter when the buyer is risk-averse. SIs can provide political cover. Resellers can bundle procurement. Cloud partners can smooth billing. Each reduces adoption friction.

However, channels can dilute feedback loops. Hot startups protect the loop. They keep product teams close to users. They also measure implementation outcomes, not just booked revenue.

6. Traction indicators: early adoption, enterprise renewals, and real usage outcomes

Renewal behavior is the cleanest traction indicator. It signals the tool became a habit. It also signals the tool survived internal scrutiny. In enterprises, that is hard-earned.

We also like outcome evidence. Did cycle time drop? Did errors fall? Did throughput rise? Hot startups help customers measure. They build dashboards, not just features.

7. Hiring momentum as a proxy for scaling and go-to-market readiness

Hiring momentum is a signal of intent. It can also be a signal of stress. We prefer “right roles” over “more roles.” Security, infra, and customer success hires are often the healthiest clues.

We also watch leadership composition. Strong teams add experienced operators. They complement visionary founders with execution leaders. That mix tends to stabilize scaling phases.

8. From hype to product-market fit: what “hot” means after 2023 to 2024

Hype used to mean model demos. Now it means reliability under load. A “hot” startup is one that can keep its SLAs while shipping new features. Buyers want predictable systems, not surprises.

We also see a shift in buyer questions. They ask about data boundaries and evals. They ask about incident response. Teams that answer crisply stand out. That is the new heat.

Hottest ai startups by category and real-world use case

1. Enterprise search and knowledge assistants for internal productivity

Enterprise search is where AI becomes daily productivity. Glean and Hebbia are strong reference points. Perplexity also influences expectations. Users now want answers with sources, not links.

The technical challenge is messy access control. Identity, group membership, and doc permissions must be exact. Retrieval also must be high precision. Otherwise, hallucinations become policy violations. Hot teams treat ACL-aware retrieval as core engineering.
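A minimal sketch of ACL-aware retrieval, assuming a hypothetical `Doc` record that carries its own allowed groups and a naive keyword-overlap score in place of a real ranking model. The key idea it illustrates is ordering: permissions are applied before ranking, so a document the user cannot see never reaches the answer step.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class Doc:
    doc_id: str
    text: str
    allowed_groups: FrozenSet[str]  # hypothetical per-document ACL


def acl_aware_search(query: str, docs: List[Doc], user_groups: FrozenSet[str], k: int = 3) -> List[str]:
    """Filter by permission first, then rank what remains."""
    # Step 1: drop every document the user has no group in common with.
    visible = [d for d in docs if d.allowed_groups & user_groups]
    # Step 2: score by shared words (a stand-in for real relevance ranking).
    terms = set(query.lower().split())
    scored = [(len(terms & set(d.text.lower().split())), d.doc_id) for d in visible]
    # Step 3: keep matches only, best score first, ties broken by id.
    ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: (-s[0], s[1]))
    return [doc_id for _, doc_id in ranked[:k]]


DOCS = [
    Doc("hr-1", "salary bands for engineering", frozenset({"hr"})),
    Doc("eng-1", "engineering onboarding guide", frozenset({"eng", "hr"})),
    Doc("pub-1", "company holiday calendar", frozenset({"all"})),
]

# An engineer never sees the HR-only document, even though it matches best.
print(acl_aware_search("engineering salary", DOCS, frozenset({"eng", "all"})))
print(acl_aware_search("engineering salary", DOCS, frozenset({"hr", "all"})))
```

Filtering after ranking would also work functionally, but filtering first keeps restricted text out of every downstream step, including prompts and logs.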

2. Legal AI platforms and contract copilots for professional services

Legal is a perfect AI wedge. The work is text-heavy and precedent-driven. Harvey is the headline name. Spellbook brings copilot ergonomics to contracts. Many firms also build internal layers.

Great legal AI is not just drafting. It is issue spotting, clause comparisons, and negotiation support. Risk tolerance is low, so evaluation matters. We expect the hottest legal startups to ship review workflows and traceable reasoning paths.

3. Healthcare AI copilots for documentation and clinical workflows

Healthcare copilots win when they reduce clinician burden. Abridge and Ambience Healthcare sit in that stream. Hippocratic AI signals a broader “agent” ambition for care journeys. OpenEvidence shows how search can be clinical.

Deployment requires trust and integration. EHR workflows are strict. Privacy expectations are higher. The strongest teams build on-prem options or strict isolation. They also design for audit trails. In medicine, “why” is as important as “what.”

4. Speech-to-text, transcription, and voice interfaces as API-first products

Speech APIs are the quiet backbone of many AI apps. Deepgram is a key infrastructure player. AssemblyAI also competes in developer-first voice. These products succeed when latency and accuracy hold under real noise.

We like voice startups that expose controls. Phrase boosting, diarization, and domain adaptation matter. Voice is also a security vector. Hot teams support redaction and safe retention. That enables healthcare, finance, and support center deployment.

5. Cybersecurity and data security posture management powered by AI

Security is already data-heavy, so AI fits. Wiz dominates mindshare in cloud security posture. Cyera brings similar urgency to data posture. HiddenLayer targets model-layer threats that many teams still ignore.

AI changes the risk surface. Prompt injection is a new category. Data exfiltration paths multiply via tools. Hot security startups instrument the agent toolchain. They also map permissions and secrets. Security is where “boring coverage” beats clever demos.

6. Public safety and emergency response AI for call handling and triage

Public safety is an “agentic” category with consequences. Startups like Prepared and Carbyne push AI into dispatch workflows. RapidSOS is part of the broader emergency data layer. The value is faster triage and better routing.

Accuracy requirements are extreme. Systems must handle accents, stress, and incomplete information. Hot teams design conservative automation. They escalate to humans cleanly. They also log decisions for review. In this space, trust is a product feature.

7. Creative AI for video generation, 3D capture, and voice synthesis

Creative AI is where users notice progress immediately. Runway sets the pace in video workflows. Pika pushes social-first creation loops. Luma AI brings 3D capture into mainstream tools. ElevenLabs reshapes voice production.

For businesses, the key is brand safety and rights. Hot creative startups ship enterprise controls. They offer content filtering and governance. They also provide predictable output tools. Marketing teams want repeatability, not roulette.

8. Developer tools and coding assistants for building AI applications faster

Developer tools are the fastest distribution channel we know. Cursor (Anysphere) is the standout IDE-native experience. Replit blends environment and assistant. Codeium competes on speed and enterprise controls. Cognition adds agentic task execution.

Hot dev tools treat code as a system, not a file. They understand repos, tests, and deployments. They also integrate with CI and policy gates. The best ones reduce review load, not just typing. That is how productivity becomes organizational, not personal.

9. Back-office agents for procurement, finance, and operational automation

Back-office work is repetitive and tool-driven, so agents fit. Sierra focuses on customer-facing operations, yet the pattern generalizes. Many newer agent startups target ticket triage, refunds, and procurement workflows. The prize is fewer handoffs.

The core challenge is action safety. An agent that can initiate refunds needs approvals. An agent that can place orders needs constraints. Hot startups ship policy layers and step-by-step tool logs. They also support rollback patterns. Automation must be reversible.
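
A policy layer of this kind can be sketched in a few lines. Everything below is a hypothetical illustration under our own assumptions: the `PolicyGate` class, the two limits, and the status names are invented for the example and do not describe a specific product.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class ToolCall:
    name: str
    amount: float
    # pending -> executed | needs_approval | rejected | rolled_back
    status: str = "pending"


@dataclass
class PolicyGate:
    auto_limit: float   # at or below this amount: no approval required
    hard_limit: float   # above this amount: always rejected
    log: List[str] = field(default_factory=list)

    def submit(self, call: ToolCall, approved_by: Optional[str] = None) -> ToolCall:
        """Apply constraints first, then approvals, and log the outcome."""
        if call.amount > self.hard_limit:
            call.status = "rejected"
        elif call.amount > self.auto_limit and approved_by is None:
            call.status = "needs_approval"
        else:
            call.status = "executed"
        self.log.append(
            f"{call.name} amount={call.amount} -> {call.status} approver={approved_by}"
        )
        return call

    def rollback(self, call: ToolCall) -> ToolCall:
        """Reverse an executed call; anything else is a caller error."""
        if call.status != "executed":
            raise ValueError("only executed calls can be rolled back")
        call.status = "rolled_back"
        self.log.append(f"{call.name} amount={call.amount} -> rolled_back")
        return call
```

With `PolicyGate(auto_limit=50.0, hard_limit=500.0)`, a 20.0 refund executes immediately, a 200.0 refund waits for an approver, and a 900.0 refund is rejected even with approval. The log records each step, so a reviewer can replay what the agent attempted.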

10. Autonomous market research and customer insights platforms

Market research is shifting from “survey then analyze” to “monitor then synthesize.” Perplexity influences this behavior, even outside search. Several B2B startups now build agentic research desks. They watch sites, docs, and internal notes.

We like platforms that separate facts from interpretations. They show sources, timestamps, and confidence. Hot teams also support private corpora. That lets companies blend public signals with internal data. Insights become a workflow, not a quarterly event.
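
One way to picture the fact-versus-interpretation split is as two separate record types. This is a sketch under our own assumptions: the `Fact` and `Interpretation` names, their fields, and the rendering format are hypothetical, and the sample data in the test is invented.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Fact:
    statement: str
    source: str        # where the statement was observed
    observed_at: str   # ISO 8601 timestamp


@dataclass(frozen=True)
class Interpretation:
    claim: str
    confidence: float              # 0.0 to 1.0, assigned by the analyst or model
    supporting_facts: Tuple[Fact, ...]

    def __post_init__(self) -> None:
        # An interpretation with no evidence is just an opinion; refuse it.
        if not self.supporting_facts:
            raise ValueError("an interpretation needs at least one supporting fact")
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be between 0.0 and 1.0")


def render(interp: Interpretation) -> str:
    """Show the claim first, then the evidence it rests on."""
    lines = [f"Interpretation ({interp.confidence:.0%} confidence): {interp.claim}"]
    for fact in interp.supporting_facts:
        lines.append(f"  Fact: {fact.statement} [{fact.source}, {fact.observed_at}]")
    return "\n".join(lines)
```

Keeping the two types apart means a reader can always trace a claim back to its sources, and a stale timestamp is visible rather than hidden inside a summary.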

Where the hottest ai startups are being built: Silicon Valley and beyond

1. Silicon Valley as an AI hotbed fueled by talent density and big-tech adjacency

Silicon Valley stays hot because talent clusters compress feedback cycles. Engineers change jobs, carry lessons, and start companies. Buyers are nearby too. That creates fast pilots and fast product changes.

From our side, Bay Area startups also invest earlier in infra quality. They expect scale. They instrument services, set SLOs, and standardize deployment. That maturity helps them sell to larger enterprises sooner.
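
For readers new to SLOs, the arithmetic is worth seeing once. The helper below is a small sketch of the standard error-budget calculation for an availability target; the function name and the 30-day default window are our own choices for illustration.

```python
def error_budget_minutes(slo: float, window_days: int = 30) -> float:
    """Minutes of allowed downtime for an availability SLO over a window."""
    if not 0.0 < slo < 1.0:
        raise ValueError("slo must be a fraction between 0 and 1, e.g. 0.999")
    total_minutes = window_days * 24 * 60
    return total_minutes * (1.0 - slo)
```

A 99.9% target over 30 days leaves roughly 43.2 minutes of downtime. A 99% target leaves about 432 minutes. That gap is why enterprise buyers ask for the number in writing.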

2. Bay Area AI company mix: enterprise automation, healthcare AI, and developer platforms

The Bay Area mix reflects immediate enterprise demand. Enterprise automation thrives because buyers need ROI. Healthcare AI thrives because the region blends tech and health systems. Developer platforms thrive because developers live there and share tools quickly.

We also see infra startups cluster locally. GPU clouds, vector databases, and eval platforms often appear near research talent. That proximity helps with hiring and partnerships. It also intensifies competition.

3. Silicon Valley startup examples: Moveworks, Tempus AI, Voyage, Cruise, Plus.ai

Examples show how “hot” evolves. Moveworks became a category leader and was then acquired by ServiceNow as agentic AI moved into IT workflows. Tempus AI scaled healthcare data into a public-market story. Voyage built retrieval models and was then acquired by MongoDB to sit closer to operational data.

Cruise is the reminder that autonomy is brutal. Strategy, regulation, and safety can reset timelines quickly. Plus.ai, now PlusAI, stays interesting because trucking autonomy has clearer economics. Physical AI is slow, yet it can be durable.

4. New York as a major center for enterprise AI, sales, and healthcare innovation

New York keeps rising as an AI center. The city has dense enterprise buyers. It also has finance, media, and healthcare institutions. That creates demanding early customers. Demanding customers can accelerate product maturity.

We also like New York’s go-to-market talent pool. Great sales leaders and customer success operators live there. AI startups need that talent. Distribution is often harder than modeling.

5. Global hotspots alongside the U.S.: Paris and London in curated AI startup lists

Paris and London show up repeatedly on curated lists. Europe produces strong research talent and practical enterprise builders. Regulatory pressure can also force better governance earlier. That becomes a selling point later.

We see more cross-Atlantic company shapes. A research hub might live in Europe. Sales might live in the U.S. Infrastructure then needs to support data residency. Hot startups plan that early instead of improvising later.

6. Remote-first teams and distributed HQ models for AI startups

Remote-first is normal now, yet it changes execution. Teams need strong written culture. They need disciplined incident response. They also need clear interfaces between research and product. Otherwise, velocity collapses.

Distributed teams also change infrastructure choices. Latency, region replication, and access control matter more. We see startups adopt multi-region patterns earlier. They also prefer managed services to reduce on-call load.

7. Using startup directories to source partnerships, talent, and competitive intel

Directories are not just for investors. Operators use them to find integrations. Recruiters use them to map talent. Product teams use them to track competitors. Used well, directories speed up decision-making.

We recommend triangulation. Pair directories with customer interviews and security reviews. Then add a technical proof of concept. That combination catches hype early. It also highlights “quietly great” teams before they become obvious.

1Byte cloud computing and web hosting for AI startup teams

1. Domain registration for AI startup brands, product launches, and landing pages

A domain is not branding fluff. It is the start of trust. It impacts email deliverability, phishing risk, and customer confidence. We advise founders to buy domains early and defensively. Typosquatting is cheap for attackers.
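
To see why defensive registration matters, it helps to list the lookalikes an attacker might try. The sketch below generates three common typo patterns: a dropped letter, a doubled letter, and two adjacent letters swapped. The function name is our own, and the list is far from exhaustive. It ignores homoglyphs and alternate TLDs, for example.

```python
from typing import List


def typo_variants(domain: str) -> List[str]:
    """Return simple typo lookalikes for the first label of a domain."""
    label, dot, tld = domain.partition(".")
    if not dot or not label or not tld:
        raise ValueError("expected a domain like 'name.tld'")

    variants = set()
    for i in range(len(label)):
        variants.add(label[:i] + label[i + 1:])                 # dropped letter
        variants.add(label[:i] + label[i] * 2 + label[i + 1:])  # doubled letter
        if i < len(label) - 1:                                  # adjacent swap
            variants.add(label[:i] + label[i + 1] + label[i] + label[i + 2:])

    variants.discard(label)  # swapping identical letters reproduces the original
    variants.discard("")     # a one-letter label minus its letter is empty
    return sorted(f"{v}.{tld}" for v in variants)
```

Running it on a short name already yields around a dozen candidates. Founders do not need to buy all of them, but checking the list against the registrar before launch is cheap.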

Launch pages should also be operational assets. They collect early leads, explain data handling, and route support. From our experience, the best AI startups publish clear privacy language early. That reduces friction when enterprise buyers appear.

2. SSL certificates to secure AI websites, dashboards, and customer-facing apps

SSL (in practice, TLS) is table stakes, yet AI products raise the bar. Users paste sensitive content into prompts. Dashboards often expose transcripts and documents. Transport security is the baseline, not the finish line. We push teams to treat encryption as a default posture.

We also encourage certificate automation. Manual renewal is an outage waiting to happen. Hot startups automate it, then move on to the harder parts. Secrets management, access logging, and tenant isolation are where real security lives.
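
Even with automated renewal, a simple expiry check is a useful safety net. The sketch below uses Python's standard `ssl.cert_time_to_seconds` to parse a certificate's `notAfter` string, which is the format returned in the dictionary from `SSLSocket.getpeercert()`. The 30-day renewal window and the function names are our own assumptions.

```python
import ssl
from datetime import datetime, timezone

# Hypothetical renewal window; pick one that matches your issuer's lifetime.
RENEW_BEFORE_DAYS = 30


def days_until_expiry(not_after: str, now: datetime) -> int:
    """Whole days between `now` and the certificate's notAfter time."""
    expires = datetime.fromtimestamp(
        ssl.cert_time_to_seconds(not_after), tz=timezone.utc
    )
    return (expires - now).days


def needs_renewal(not_after: str, now: datetime,
                  window_days: int = RENEW_BEFORE_DAYS) -> bool:
    """True when the certificate is inside the renewal window (or expired)."""
    return days_until_expiry(not_after, now) <= window_days
```

Passing `now` explicitly keeps the check easy to test. In a scheduled job, teams would typically pass `datetime.now(timezone.utc)` and alert when `needs_renewal` returns true, which means the automation has silently failed.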

3. WordPress hosting, shared hosting, cloud hosting, and cloud servers with 1Byte as an AWS Partner

AI teams need a stack that matches their stage. WordPress hosting fits marketing sites and docs. Shared hosting can work for early landing pages. Cloud hosting and cloud servers become essential once APIs and dashboards ship. That is where uptime and scaling matter.

As an AWS Partner, we at 1Byte design paths that reduce rework. Founders can start simple, then graduate to isolated environments. Operators can add observability, backups, and security layers without a platform rewrite. If we were building an AI product this cycle, we would ask one question first: which workflow must never go down, even during model chaos?